Teachers Aren’t Resisting AI. They’re Protecting Outcomes.

I made this mistake for years.

When training teachers to design microschools in their districts, I’d walk through the entire day: Launch. Core Skills. Projects. 1-1 check-ins. Close.

Logical, right? But they were uneasy. They didn’t trust the academics yet. So they’d quietly turn the morning Launch back into a direct-instruction lesson. They were scared not to “teach the content.”

So they squeezed it back in — during what was supposed to be a warm-up. That’s when I realized: Don’t start at the beginning of the day. Start by designing the 9am–noon academic block. That switch unlocked the entire design session.

When we design the 9am–noon academic block, which many Guide models (including @actonacademy and @AlphaSchoolATX) call Core Skills, I tell teachers to pressure-test every AI-powered app with five questions. If the edtech can’t answer them, it doesn’t make the cut. And here’s the surprising part: It’s 2026. It is still shockingly hard to find tools that pass this test. Here’s the first one.

- What benchmark are you building toward? Before you pick an app, decide what “success” means. For us, that usually means NWEA MAP for growth and AP exams in high school. If students can’t demonstrate progress on a credible, third-party assessment, confidence erodes. Parents worry. Teachers revert to conventional instruction. And it becomes much harder to justify the grades on the transcripts your startup distributes.

- Does the app actually prepare students to perform on that benchmark? Not “does it feel generally rigorous?” Not “does it have good reviews?” Not “do the kids like it?” But will it move performance on the benchmark test you selected and are using as your yardstick? If your benchmark is MAP, does the app align with the same standards MAP uses? If it’s AP, is the app designed to get your students a 5? If the app lives in its own internal scoring system and never connects to external proof, it's likely to waste students' time.

- Does the app diagnose where a student is right now — and build a personalized skill plan from there? This is where AI really matters. Can the app identify knowledge gaps? Can it generate a skill plan customized to that student — not the average 5th grader, not the pacing guide, not the class? If Daniel is two years behind in fractions, does the app find the exact missing pieces and build upward from there? Or does it just drop him into “Grade 5 Math” and hope? Actual personalization starts with diagnosis. Without that, you’re just digitizing whole-group instruction.

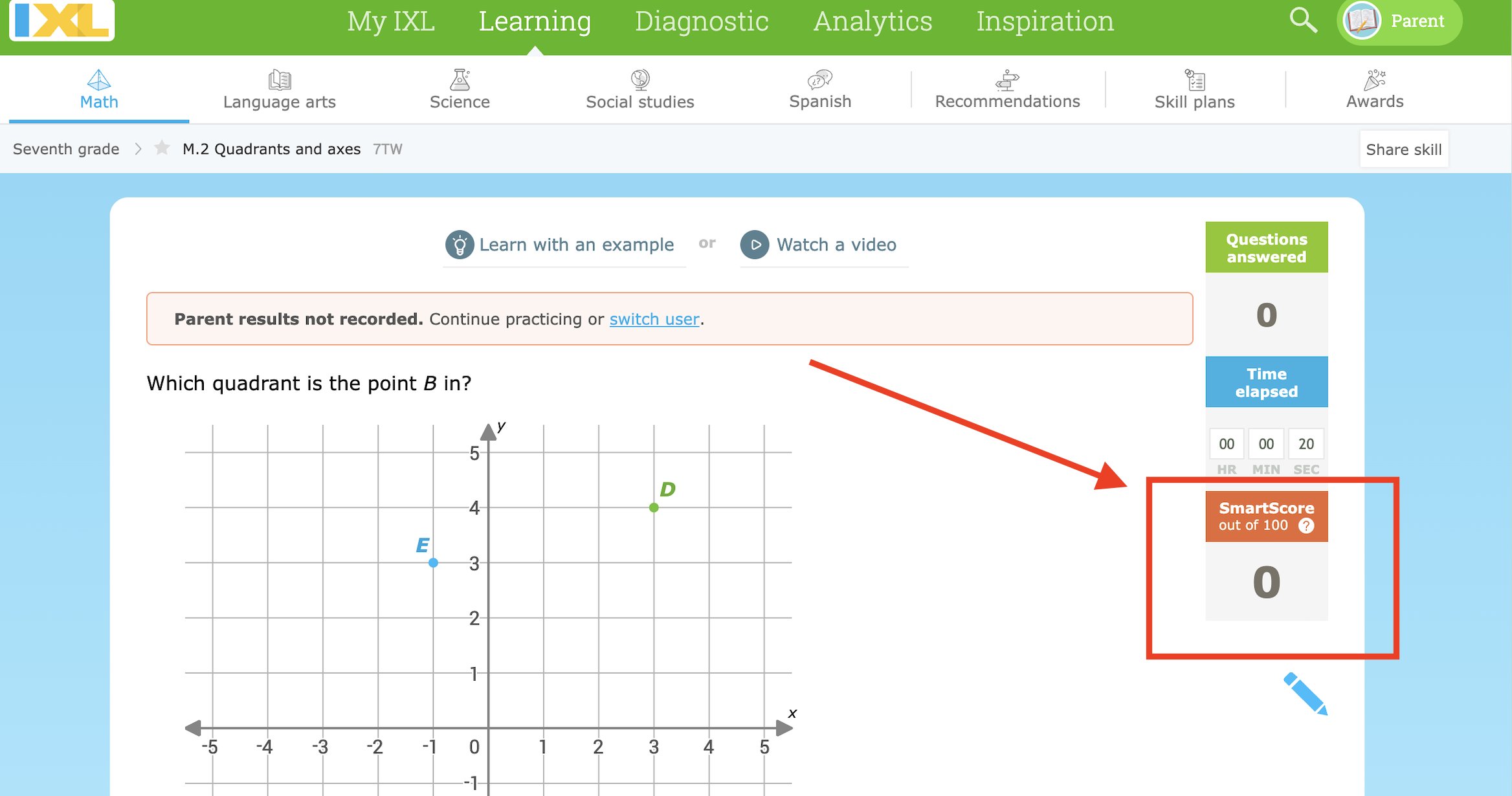

- What’s the daily win? Does the app make it obvious what the student’s personal goal is for the morning — and whether they hit it? A tip: avoid minutes. “18 minutes on Lexia” is not a goal. A student can sit there for 18 minutes and earn a checkmark. Time ≠ progress. Look for a metric that proves the student made real gains during those 18 minutes — in their personal challenge zone. And make sure students can see it. It should be visible on their screen like a speedometer. IXL does this well. The SmartScore only climbs when students answer with 80%+ accuracy. That’s the kind of empowerment and clarity students need.

- How strong is the teaching? Does the app provide solid direct instruction? If the student gets stuck, what happens? Another video or explanation? A diagram? A hint? A different example? Or does it just give them another problem — and hope repetition clears the confusion? Clear, direct instruction — the “teach piece” — matters more than people think. We’ve seen students choose the boring interface time and again because the explanation actually made sense. Clear teaching beats flashy graphics.

These five questions aren’t the only criteria. But in my experience, they eliminate about 95% of edtech immediately. No benchmark test alignment. No real diagnosis and personalized skill plans. No student-facing daily goal. Insufficient instructional videos and explanations. If an app can’t clear those bars, it won't work for student-driven learning in a microschool. And honestly, this is a call to edtech builders. If we want kids owning their learning, we need tools that are:

- Optimized for students to crush reliable 3rd-party tests

- Actually adaptive

- Transparent about progress in a way kids can understand

- Strong on explanation

Teachers aren't necessarily resisting disruptive innovation or AI for instruction. They’re protecting academic outcomes. Build tools that protect both.

P.S. @lexiacore5 I will love you forevs if you read this post before we meet today.
